You are not a horse

AI and the future of labor demand

There’s a popular argument that AI will do to human workers what tractors did to horses. Tractors could do what horses did. Horses became obsolete. AI can do what humans do. Therefore...

And every major AI builder seems to agree that humans are next. Musk says AI will “replace all jobs.” Amodei is out there talking all the time about everyone losing their jobs, grounding it in his framing of AI as “a general labor substitute.” OpenAI investors are out there talking about “80% of all jobs by 2030.”

These are important people in the space, not some random blogger, but they are not exactly a random sample of the most knowledgeable people either. Still, it’s certainly not a new fear, and not just an AI thing. Wassily Leontief of input-output fame (more on input-output in a minute) wrote a few pieces in the early 1980s expressing this worry.

If AI really is a perfect substitute for human labor, any cost advantage, however small, drives production to 100% AI. You don’t need an essay to prove it. But “AI will eventually be a perfect substitute” is doing all the work.
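To see why the conclusion is trivial once you grant perfect substitution, here is a minimal sketch. It assumes a linear production function, Q = H + A, in which one unit of human work and one unit of AI work are fully interchangeable. The function name and the cost figures are illustrative and do not come from the paper.

```python
def cheapest_mix(cost_human: float, cost_ai: float, output: float = 1.0) -> dict:
    """Cost-minimizing input mix when Q = H + A (perfect substitutes).

    Every unit of output needs one unit of either input, so the firm buys
    only whichever input is cheaper. On an exact tie, this sketch keeps humans.
    """
    if cost_ai < cost_human:
        return {"human": 0.0, "ai": output}
    return {"human": output, "ai": 0.0}


# A 1% cost advantage is enough to flip the entire mix.
print(cheapest_mix(cost_human=100.0, cost_ai=99.0))   # {'human': 0.0, 'ai': 1.0}
print(cheapest_mix(cost_human=100.0, cost_ai=101.0))  # {'human': 1.0, 'ai': 0.0}
```

The all-or-nothing corner comes entirely from the linear form. Any curvature in production, or any real difference between the two inputs, breaks it.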

That assumption hides a lot: many margins of adjustment, and the heterogeneity that makes the world the world and not a simple model. How substitutable is AI right now? What would it take for that to rise high enough? What else has to hold?

Even the historical example that “tractors could do what horses did, therefore horses became obsolete” sounds like one step, but it’s actually several. And “AI can do what humans do, therefore humans become obsolete” is hiding even more.

So let’s go through those steps. This newsletter is based on a new working paper that goes through the math and economics (really, mostly basic accounting) in detail.

What collapse of human labor actually means

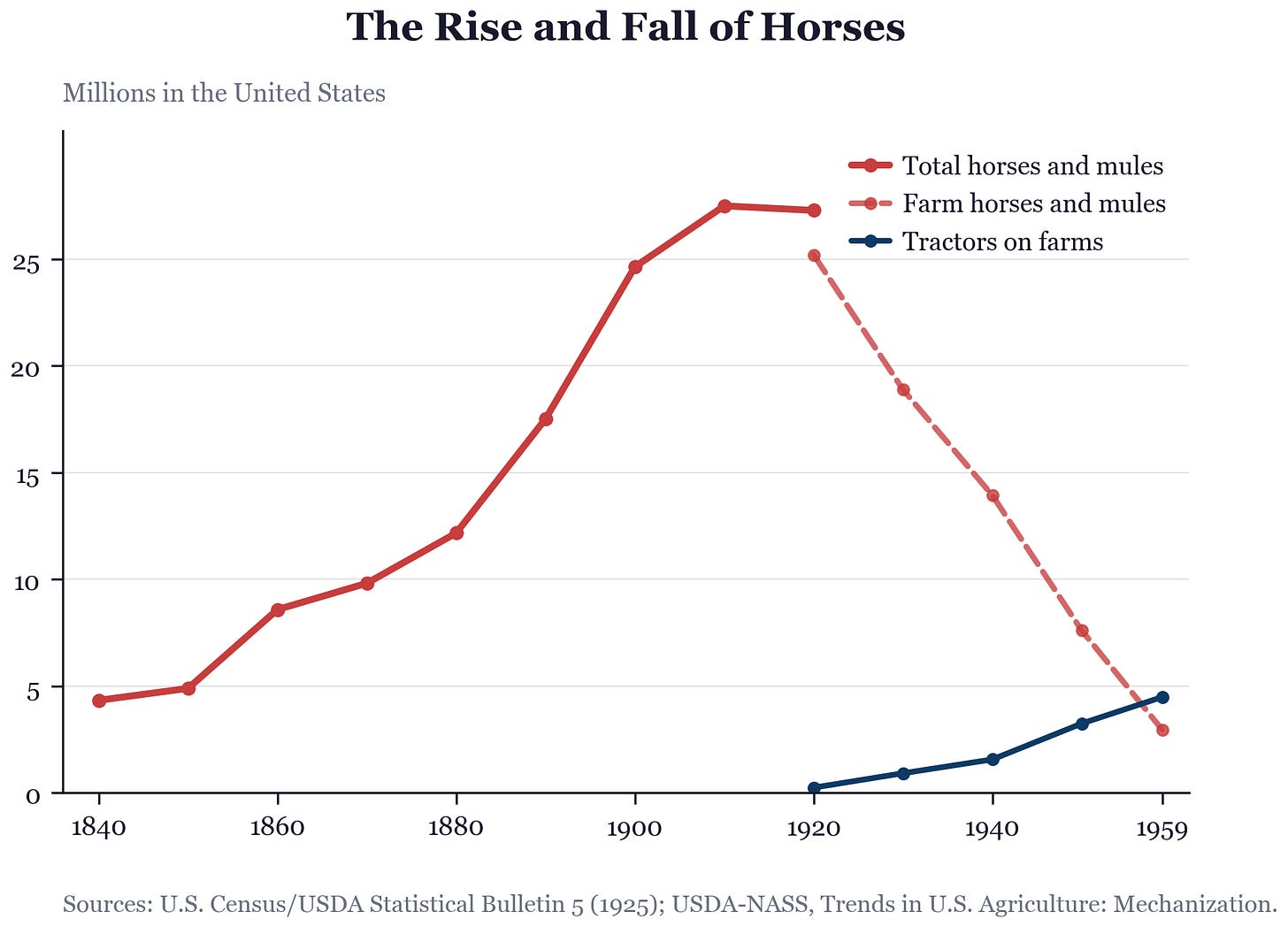

For a quick recap for those unfamiliar with the history of horses in the US: the US horse population rose from 4.3 million in 1840 to 27.3 million in 1920. Then came the fall, with farm horses and mules dropping to roughly 3 million by 1960.

Horses basically had one job that came and went. For humans, we need to be more careful. Let’s make sure we have our accounting right and be clear about what a collapse actually means.

For simplicity, suppose human labor demand goes to zero. Not low. Zero. What does that require? It means no dollar you spend, anywhere in the economy, passes through a human hand at any point in its supply chain. Not the person who made the thing. Not the person who shipped it. Not the person who designed it, sold it, maintained it, or cleaned the building where it was assembled. Zero human labor embodied in final expenditure. That’s the target. That’s what I’m going to take “humans become horses” to mean, stated precisely.

This is the input-output idea Leontief built his career on. You can trace any final purchase back through its supply chain and add up all the human labor that went into it, direct and indirect. A cup of coffee has the barista, but also the roaster, the trucker, the farmer, the person who made the truck. “Embodied labor” means all of it. For labor demand to collapse, every one of those links has to go to zero, in every product anyone buys.
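Leontief’s accounting can be sketched in a few lines. The total output x needed to deliver final demand f solves x = Ax + f, where A[i][j] is how much of sector i’s output is used per unit of sector j’s output. Embodied labor is then the direct labor coefficients multiplied by x and summed. The sketch below solves for x by fixed-point iteration, which is the power series behind the Leontief inverse. It assumes a productive A so that the iteration converges. The three-sector coffee chain and every coefficient are hypothetical.

```python
def total_output(A, f, tol=1e-12, max_iter=10_000):
    """Solve x = A x + f by fixed-point iteration (the Leontief power series).

    Converges when A is productive (spectral radius below 1).
    """
    n = len(f)
    x = list(f)
    for _ in range(max_iter):
        new_x = [f[i] + sum(A[i][j] * x[j] for j in range(n)) for i in range(n)]
        if max(abs(a - b) for a, b in zip(new_x, x)) < tol:
            return new_x
        x = new_x
    raise RuntimeError("did not converge; check that A is productive")


def embodied_labor(A, labor_per_unit, f):
    """Direct plus indirect labor needed to deliver final demand f."""
    x = total_output(A, f)
    return sum(coef * xi for coef, xi in zip(labor_per_unit, x))


# Hypothetical sectors: 0 = coffee farm, 1 = trucking, 2 = cafe.
A = [
    [0.0, 0.0, 0.2],  # farm output used per unit of cafe output
    [0.1, 0.0, 0.1],  # trucking used per unit of farm output and per unit of cafe output
    [0.0, 0.0, 0.0],  # no sector uses cafe output as an input
]
f = [0.0, 0.0, 1.0]      # final demand: one unit of cafe output
labor = [0.5, 0.3, 0.4]  # hours of direct labor per unit of each sector's output

print(round(embodied_labor(A, labor, f), 3))  # 0.536: barista plus farmer plus trucker

# Automate the barista entirely: embodied labor falls but stays positive.
print(round(embodied_labor(A, [0.5, 0.3, 0.0], f), 3))  # 0.136
```

Zeroing one link is not enough. Every coefficient in the chain has to reach zero before the cup of coffee carries no human labor.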

The economy is not one production function. It is many activities. When AI makes some of them cheaper, people don’t just buy more of the same thing. They buy something else.

Every dollar you spend lands somewhere. Some dollars land in activities with lots of human labor inside them: a restaurant, a therapist, a roofer. Some land in activities with almost none: a streaming subscription, an automated checkout, cloud storage. So when we are tracing out what happens when AI gets cheaper, it’s not just “Can AI do my job?” It is “When everyone saves money because AI did my job cheaper, what do they buy next?”

Aggregate labor demand depends on three things: how much people spend in total, how much of that spending lands on activities with human labor inside them, and how much labor is embodied in each of those activities. For human labor demand to collapse, it’s not enough for AI to displace workers inside some activities. Every dollar of spending, wherever it lands, must lose all its embodied human labor. That’s three channels, and the horse argument needs all three to go wrong simultaneously.
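The three channels fit in one line of accounting: L = E × Σ_j s_j λ_j. The notation is mine, not the paper’s. E is total spending, s_j is the share of spending that lands on activity j, and λ_j is the hours of labor embodied per dollar of activity j. Here is a minimal sketch with made-up numbers.

```python
def aggregate_labor_demand(total_spending, spending_shares, labor_per_dollar):
    """Embodied labor L = E * sum_j s_j * lambda_j.

    total_spending:   E, total final spending in dollars
    spending_shares:  s_j, fraction of spending landing on activity j (sums to 1)
    labor_per_dollar: lambda_j, hours of labor embodied per dollar of activity j
    """
    if abs(sum(spending_shares.values()) - 1.0) > 1e-9:
        raise ValueError("spending shares must sum to 1")
    return total_spending * sum(
        share * labor_per_dollar[activity] for activity, share in spending_shares.items()
    )


shares = {"restaurant": 0.3, "therapy": 0.1, "streaming": 0.6}
labor = {"restaurant": 0.02, "therapy": 0.015, "streaming": 0.001}
print(aggregate_labor_demand(1_000.0, shares, labor))  # about 8.1 hours

# Fully automate restaurants and streaming: labor demand falls but is not zero,
# because the dollars landing on therapy still carry labor.
labor_automated = {"restaurant": 0.0, "therapy": 0.015, "streaming": 0.0}
print(aggregate_labor_demand(1_000.0, shares, labor_automated))  # about 1.5 hours
```

L reaches zero only if every activity that receives a positive share of spending has λ_j = 0.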

The important starting point for thinking about labor is the idea that nobody wants labor. A restaurant doesn’t want waiters; it wants orders taken, customers reassured, mistakes corrected. So labor demand is derived demand. How does AI change how much labor firms demand?

When AI can do the things firms are actually buying, cheaper AI does two things at once. Firms substitute AI for workers, which reduces labor demand per unit of output. But cheaper AI also lowers output prices, output expands, and the expansion pulls labor demand back up. Whether labor demand rises or falls depends on which effect is larger. This is the Hicks-Marshall decomposition of derived demand into substitution and scale effects.
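The Hicks-Marshall logic has a standard textbook form. The assumptions here are mine and not necessarily the paper’s: two inputs, constant returns, competitive output pricing, constant elasticities, and small changes. Under them, the elasticity of labor demand with respect to the AI price is s_AI × (σ - η). Here s_AI is AI’s share of costs, σ is the elasticity of substitution between AI and labor, and η is the absolute price elasticity of demand for the output. A minimal sketch with made-up parameter values:

```python
def labor_demand_response(ai_price_change, ai_cost_share, sigma, eta):
    """Approximate log change in labor demand after a log change in the AI price.

    Assumes two inputs, constant returns, competitive output pricing, and
    constant elasticities (the Hicks-Allen cross-price result):
        d ln L / d ln p_AI = s_AI * (sigma - eta)
    """
    # Substitution effect: cheaper AI (a negative change) means less labor per unit of output.
    substitution = ai_cost_share * sigma * ai_price_change
    # Scale effect: cheaper AI lowers the output price, output expands, and labor is pulled back up.
    scale = -ai_cost_share * eta * ai_price_change
    return substitution, scale, substitution + scale


# Hypothetical numbers: the AI price falls 10%, AI is 20% of costs, and sigma = 1.5.
for eta in (0.5, 2.0):  # inelastic vs. elastic demand for the product
    sub, scl, net = labor_demand_response(-0.10, ai_cost_share=0.20, sigma=1.5, eta=eta)
    print(f"eta={eta}: substitution {sub:+.3f}, scale {scl:+.3f}, net {net:+.3f}")
```

With inelastic product demand, the substitution effect wins and labor demand falls. With elastic demand, the scale effect wins and labor demand rises, even though AI got cheaper in both cases.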

This is going to be the organizing principle for everything. When a dollar is saved, where is it redirected? To new tasks? To new jobs? To new sectors? It must go somewhere.

“AI can do the tasks.”

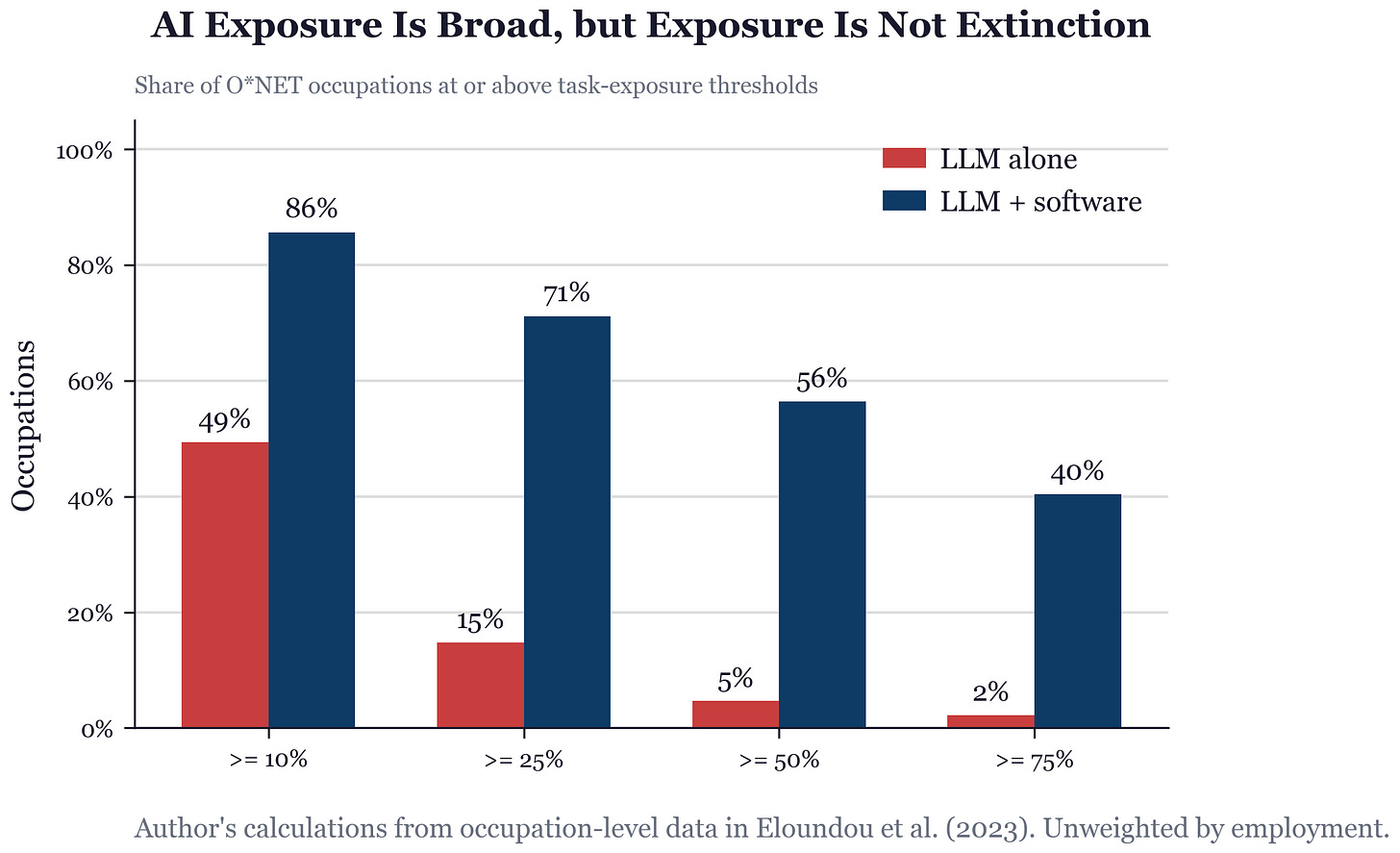

This is obviously true for many things, and even early models could do a lot of them. For example, the early GPT exposure paper by Eloundou, Manning, Mishkin, and Rock estimated that roughly 80% of the U.S. workforce could have at least 10% of their tasks affected by LLMs. With complementary software, 86% of occupations cross the 10% exposure threshold.

A lot of work has been done on this since, and the task-level evidence backs it up. In a large customer-support setting, access to generative AI raised issues resolved per hour by about 15%. In a professional writing experiment, ChatGPT reduced average task time by 40% and raised measured output quality by 18%. In a controlled GitHub Copilot experiment, developers completed a coding task 55.8% faster. These aren’t tiny effects.

But they’re effects on tasks. The saved dollar doesn’t vanish when a task gets automated. It can be redirected to new tasks within the same job, such as more review, more client management, more judgment calls. Just as there is no fixed amount of demand (which is why scale effects matter), there is no fixed job.

“A job is more than a task list.”

There’s a ritual in AI discourse where someone posts a demo, the demo does a task associated with a job, and people conclude the job is doomed. Sometimes they’re right. But the inference skips about fifteen steps. What does it actually cost to deploy, errors included? Do customers trust it? Does management know how to reorganize around it? A chatbot demo can appear overnight. A hospital reorganizing clinical liability around AI cannot.

We need to think not just about jobs but organizations. Often the result is a team, not a replacement. A human-AI pair produces output. But complementarity is not free. A pair that produces only slightly more than the AI alone doesn’t justify the human wage. The human has to add something the AI can’t replicate cheaply.
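The complementarity condition is simple arithmetic. In this minimal sketch, the function name and the daily figures are hypothetical. It also assumes the AI costs the same with or without the human, so that cost cancels out of the comparison.

```python
def human_worth_hiring(output_pair, output_ai_alone, price, wage):
    """Keep the human only if the output they add on top of the AI covers their wage.

    Assumes the AI's cost is the same with or without the human, so it cancels out.
    """
    added_revenue = price * (output_pair - output_ai_alone)
    return added_revenue >= wage


# Hypothetical daily figures: AI alone makes 100 units, each sells for $50, the wage is $200.
print(human_worth_hiring(output_pair=102, output_ai_alone=100, price=50.0, wage=200.0))  # False
print(human_worth_hiring(output_pair=110, output_ai_alone=100, price=50.0, wage=200.0))  # True
```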

Surgery, aviation, structural engineering, fiduciary advice: for legal reasons alone, these are areas where we can expect the damage from an error to dwarf the savings from cheaper production. Again, that can always change one day, but not soon. When failure on one component destroys the value of all the others, you don’t care about the sticker price. That’s the O-Ring logic. You care about the cost per unit that actually works. When the damage stakes are high enough, human-supervised production wins regardless of how cheap AI becomes.
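The cost-per-working-unit comparison can be made concrete. This sketch is not Kremer’s O-Ring production function itself but a simpler assumption of mine. Each attempt costs a fixed amount and succeeds with some probability, and each failure carries a fixed damage, so expected attempts per success follow a geometric distribution. All numbers are hypothetical.

```python
def cost_per_working_unit(unit_cost, success_prob, damage_if_failure):
    """Expected cost of obtaining one unit that works, counting failures.

    Expected attempts per success = 1 / p (geometric distribution), and every
    failed attempt along the way also imposes damage_if_failure.
    """
    if not 0.0 < success_prob <= 1.0:
        raise ValueError("success_prob must be in (0, 1]")
    expected_attempts = 1.0 / success_prob
    expected_failures = expected_attempts - 1.0
    return expected_attempts * unit_cost + expected_failures * damage_if_failure


for damage in (100.0, 1_000_000.0):  # low-stakes vs. high-stakes task
    # Even a free AI (unit_cost=0) loses once failures are costly enough.
    ai = cost_per_working_unit(unit_cost=0.0, success_prob=0.99, damage_if_failure=damage)
    human = cost_per_working_unit(unit_cost=1_000.0, success_prob=0.9999, damage_if_failure=damage)
    print(f"damage {damage:>11,.0f}: AI {ai:>10,.2f}  human-supervised {human:>10,.2f}")
```

At low stakes the free AI wins easily. At high stakes the human-supervised process is roughly ten times cheaper per working unit, even though the AI’s sticker price is zero.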

“Fine, the current jobs go. Where does the spending go?”

Suppose substitution wins inside most jobs. The saved dollar escapes the workplace entirely. Where does it go? Most standard models aggregate into a single final good, so this question plays no role. The real economy has many sectors, and the dollar has to land somewhere.

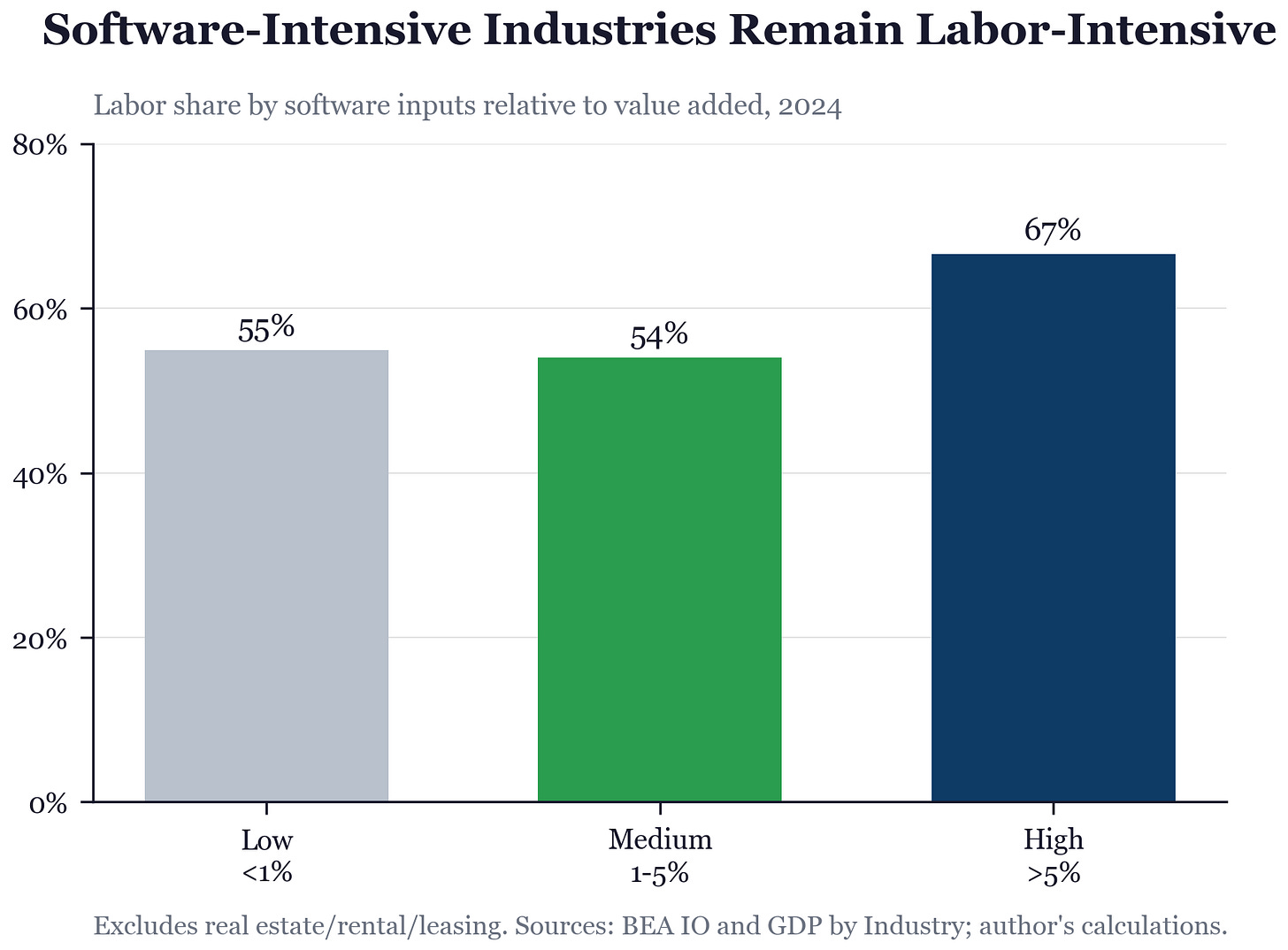

Start with software as a microcosm. Software-heavy industries have already been automated by digital inputs for decades. If substitution were going to drive labor out of a sector, this is where you’d see it first. The chart above groups industries by how much software they purchase relative to their value added: low, medium, and high software intensity. What do you see?

The most software-intensive industries don’t just retain human labor; they have a higher labor share (67%) than the least software-intensive ones (55%). Heavy digital inputs didn’t drive out human labor. If anything, the industries that automated the most are the ones that pay the largest share of their value added to workers. BLS projects U.S. employment to increase by 5.2 million from 2024 to 2034. Software-developer employment? Projected to grow 17.9%, despite direct AI exposure.

The scale effect won within the sector most exposed to digital automation. The BLS could be completely off, but the evidence so far points strongly toward the scale effect dominating in software-intensive industries.

Software is one extreme, but basically the same pattern holds across the whole economy, over a much longer period.

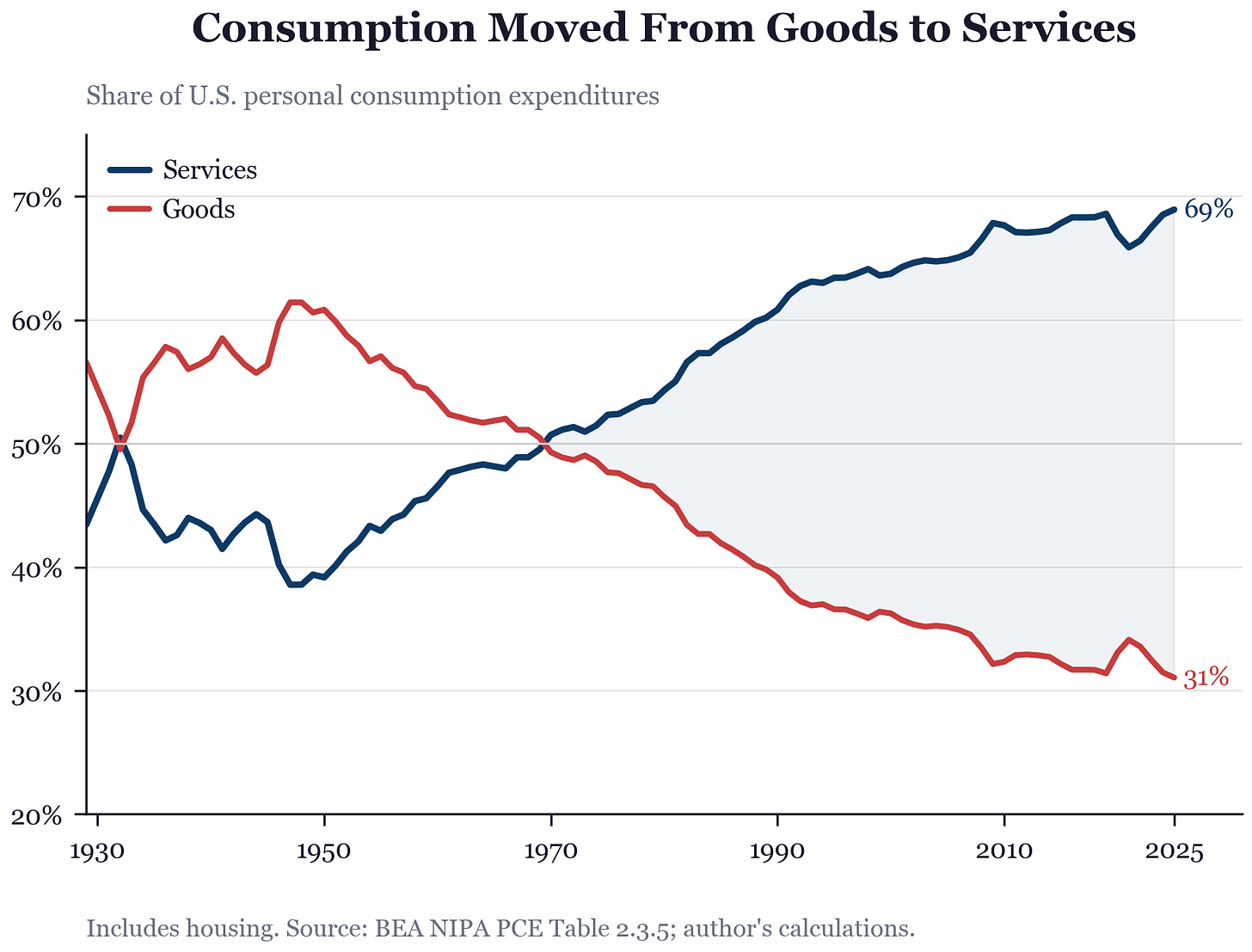

For another angle on the problem, let’s go bigger and look across the biggest sectors in the economy: services vs. goods. In 1929, most consumer spending went to physical goods. Today, roughly two-thirds goes to services. As manufacturing got cheaper, people didn’t just buy more stuff. They shifted spending toward healthcare, education, restaurants, personal services. That’s the saved dollar in action at a level that is not quite macro, but close: the savings from cheaper goods flowed toward services.

In terms of our guiding decomposition, goods got cheaper. I’m going to be a bit fast and loose here, but the scale effect didn’t show up in goods. Demand for physical stuff didn’t explode. Instead, those freed-up dollars migrated to services, and the scale effect showed up there. The substitution effect won inside goods-producing industries. The scale effect won across sectors. Output overall expanded. So if you’re thinking as a macroeconomist, the scale effect dominated.

But migration alone doesn’t help workers unless the destination still has human labor inside it. Did it?

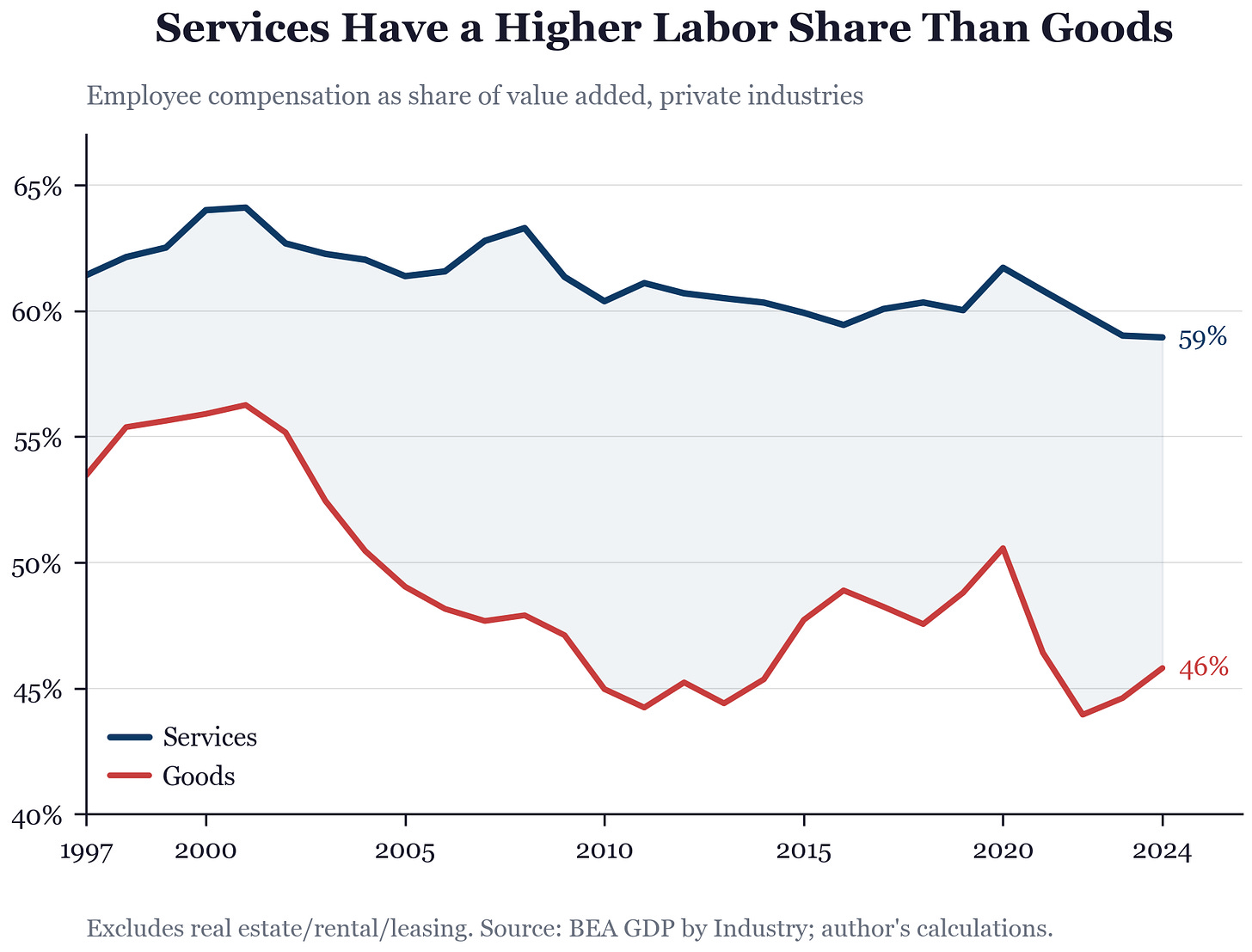

This chart tracks what fraction of each sector’s value goes to workers — employee compensation as a share of value added. Services consistently pay a higher share to labor than goods-producing industries. Spending didn’t just migrate. It migrated toward sectors where more of each dollar ends up in someone’s paycheck.

Again, so far, yes. We are moving toward services.

You might say this actually supports the horse outcome: the price of goods fell and we bought fewer goods. What I’m saying is that there is a margin of adjustment, an escape hatch, when you are looking at an economy as diverse as the modern U.S. economy.

And comparative advantage always pops up fighting against this. When automation makes some things cheap, the things that remain expensive tend to be the things that are hard to automate. And the things that are hard to automate are, almost by definition, the things where humans still have comparative advantage. The saved dollar drifts toward where humans are still worth paying. That’s not optimism. That’s what comparative advantage means.

Bessen showed this sector by sector. In early textiles, power looms cut labor per yard of cloth. But cloth got so cheap that demand exploded, and total employment in textiles rose for decades. Same in early steel, early autos. Eventually demand saturated, prices stopped falling fast enough, and automation reduced employment in each sector. The question for AI isn’t “does automation destroy jobs?” It’s “which phase are we in, for which sectors?”
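Bessen’s phases come down to one number: whether demand for the sector’s output is elastic (elasticity above 1) or inelastic. This minimal sketch rests on my own simplifying assumptions: labor is the only cost, demand has constant elasticity, and the wage is fixed. In Bessen’s story the elasticity itself falls as demand saturates, and this sketch only mimics that by comparing two fixed elasticities.

```python
def sector_employment(labor_per_unit, elasticity, demand_scale=100.0, wage=1.0):
    """Employment in a sector facing constant-elasticity demand Q = k * p**(-elasticity).

    Simplifying assumption: labor is the only cost, so price = wage * labor_per_unit.
    Employment = labor_per_unit * Q, which scales as labor_per_unit**(1 - elasticity).
    """
    price = wage * labor_per_unit
    quantity = demand_scale * price ** (-elasticity)
    return labor_per_unit * quantity


# Automation halves labor per unit of output (think labor per yard of cloth).
for label, elasticity in (("early, elastic demand", 2.0), ("saturated demand", 0.5)):
    before = sector_employment(1.0, elasticity)
    after = sector_employment(0.5, elasticity)
    print(f"{label}: employment {before:.1f} -> {after:.1f}")
```

The same productivity gain doubles employment when demand is elastic and cuts it by about 30% once demand is inelastic.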

Where might the AI-saved dollar land today? Healthcare is already 18% of GDP and rising. Elder care will grow as populations age.

Mokyr, Vickers, and Ziebarth have a great JEP piece that makes the historical case for why this time isn’t different: new tasks appeared, comparative advantage held, products we couldn't imagine created new work.

Horses had no equivalent destination.

“Fine, spending chases automation.”

The saved dollar found human-intensive sectors last time. The best argument for why this time is different comes from Philip Trammell’s “Is labor a luxury in the long run?” His answer is probably not. Even if people initially spend more on human services as they get richer (live music, handmade goods, personal care), four forces erode that over time. AI-produced variety keeps expanding, competing for every dollar that lands on human-made goods. Consuming human services has an opportunity cost: time spent at a live concert is time not spent on a superior AI experience.

Other scarce goods—beachfront land, status goods, R&D—compete with labor for the “scarce thing people pay a premium for” slot. And capital goods keep getting cheaper to produce, so the investment share of spending can grow without limit.

Trammell’s Coca-Cola analogy is the sharpest version. Original Coke held 50% of the soda market. Then Diet Coke, Cherry Coke, Pepsi Max, energy drinks, sparkling water. Even with brand loyalty and supply restrictions, the share fell below 20%. If AI keeps inventing new varieties of goods that compete with human-produced ones, even a strong initial preference for human labor gets diluted by expanding choice.
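The dilution mechanism can be illustrated with a textbook share formula. This is my stand-in, not Trammell’s model. It is a logit-style demand system in which each good’s spending share equals its preference weight divided by the sum of all weights. The weights are made up.

```python
def human_share(human_weight, n_ai_varieties, ai_weight=1.0):
    """Spending share of one human-made good under a simple logit-style demand system.

    Hypothetical setup: the human good has preference weight human_weight and each
    AI-made variety has weight ai_weight; each share is its weight / total weight.
    """
    return human_weight / (human_weight + n_ai_varieties * ai_weight)


# A strong initial preference (weight 5 vs. 1) still gets diluted as varieties multiply.
for n in (1, 5, 20, 100):
    print(f"{n:>3} AI varieties: human-made share {human_share(5.0, n):.1%}")
```

Under these assumptions, the human-made share falls from about 83% to under 5% as AI varieties go from 1 to 100, even though no individual AI variety is preferred to the human good.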

I take this seriously. It’s a possible scenario. But notice what it requires. Not just that AI-produced variety expands (which it will) but that it expands fast enough and broadly enough to pull spending away from every human-intensive category at once. The question isn’t whether AI competes with some human goods. It’s whether any human-intensive island survives. Does anyone still spend money on something with a person inside it?

The numbers still have to be extreme. Suppose AI eats 85% of the economy. Software, accounting, law, medicine, logistics, most management, most media. All gone or nearly gone as human labor categories. Suppose the remaining 15% of spending goes to things with at least 30% human labor inside them. Elder care, in-person education, surgery, live performance, skilled trades, therapy, status goods. Then the aggregate human labor share is at least

S ≥ 0.15 × 0.30 = 0.045

That may not sound great, but I’m literally just putting a floor under it. Knowing nothing else about the economy, that much labor share survives. Not large. Not utopia. But not zero, and that is the lowest it can go under these assumptions. And remember, a declining labor share is not the same thing as declining labor demand if the pie is growing much larger.
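The bound is a two-step inequality. The notation is mine, not the paper’s. Let w_j be the share of spending on activity j (the w_j sum to 1) and λ_j the human labor share embodied in activity j (between 0 and 1). Let H be the human-intensive island, with a combined spending share of 0.15 and λ_j ≥ 0.30 for every j in H. Dropping everything outside H can only lower the sum, and replacing each λ_j in H with its floor can only lower it further:

```latex
S = \sum_j w_j \lambda_j
  \;\ge\; \sum_{j \in H} w_j \lambda_j
  \;\ge\; 0.30 \sum_{j \in H} w_j
  = 0.30 \times 0.15 = 0.045
```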

But is it just sentimentality to think spending stays on human-intensive stuff? Alex Imas argues no. As AI makes commodities cheap, real incomes rise, and richer people systematically shift spending toward what he calls “relational” goods.

There's a huge literature in economics on structural change, the long-run pattern where spending shifts from agriculture to manufacturing to services as countries get richer. The big question is why. Is it because prices change and people buy more of whatever got cheaper? Or is it because incomes rise and people just want different stuff? Comin, Lashkari, and Mestieri, for example, decompose the two and find that income effects account for over 75% of the shift. That matters here. If spending migration were mostly about chasing cheap goods, AI making things cheaper would pull dollars toward AI-produced stuff. But it's mostly about what richer people want. And richer people have consistently wanted more services with humans in them.

In experiments, when subjects learn that others will be excluded from purchasing an identical product, willingness to pay roughly doubles. Pure exclusivity premium. Anonymous, no status signaling possible. The premium is stronger for human-made goods. Human-created artwork gains 44% in value from exclusivity, versus 21% for AI-generated artwork. AI-made goods feel copyable. Human-made goods feel scarce even when they aren’t. People want what other people can’t have. That wanting doesn’t run out, and it sticks to things a person made.

Maybe if we wait long enough, AI variety erodes even that. Maybe. But the structural change evidence says income effects dominate price effects by three to one. When basic needs get cheaper, humans don’t say “good, I’m done wanting.” They invent new ways to compare themselves with neighbors. Whether the new wants land on human-made goods or AI-made goods is the open question, and the experimental evidence so far favors humans.

A falling labor share is not falling labor demand. There is a range where labor’s share of income is declining but total labor demand is still rising, because the pie is growing faster than labor’s slice is shrinking. That range may be where we are right now. It would look like “AI is taking over” in share terms while employment keeps growing. The popular argument runs these together and they are not the same claim.
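The distinction between share and level comes down to one multiplication. This minimal sketch uses total labor compensation (labor share times output) as the stand-in for labor demand, which is an assumption. The numbers are hypothetical.

```python
def labor_income(labor_share, output):
    """Total labor compensation: labor's slice of the pie."""
    return labor_share * output


def labor_demand_rises(share_before, share_after, output_growth):
    """True if labor's slice grows in level even when its share falls.

    Condition: share_after * (1 + output_growth) > share_before.
    """
    return labor_income(share_after, 1.0 + output_growth) > labor_income(share_before, 1.0)


# Hypothetical: labor's share falls from 60% to 50%.
print(labor_demand_rises(0.60, 0.50, output_growth=0.50))  # True: 0.75 > 0.60
print(labor_demand_rises(0.60, 0.50, output_growth=0.10))  # False: 0.55 < 0.60
```

With these numbers, output only needs to grow by more than 20% (0.60 / 0.50 - 1) for labor’s slice to grow while its share shrinks.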

We already see that. Higher-income people consume more services, and services tend to have a high labor share. Again, that can always flip in the future, but this is the evidence we have.

I think humans have a shot

Going through all of the layers, from tasks (where we are just starting to see some substitution) all the way up to the macroeconomy, leaves me pretty skeptical of the horse outcome. I know I’ve hidden it really well up to this point, but that’s where I’m at.

AI will do many tasks. It will reorganize jobs, probably painfully. Some sectors will lose most of their human labor. Spending can chase automation. All of that can happen and still not get to zero. Because at every step, there’s a saved dollar looking for somewhere to land. And the question is always the same one. Where does it go next?

For the horse outcome, you need that saved dollar to find nothing with a human attached to it.

That’s a very specific future. It might happen. But it has to happen everywhere at once, and the evidence we have, the structural change evidence, the revealed preferences, the experimental results, keeps pointing the other way.